ChatGPT launched on November 30, 2022, and had amassed a remarkably large user base by January. Occam data suggests that interest has only grown since then: awareness among respondents rose from 12% in January to 30% in March (Figure 1).

Despite awareness reaching 30%, only 7% of respondents have signed up for a ChatGPT account. Among those who have, usage is generally infrequent: fewer than half use the platform more than once a week (Figure 2). This suggests that for many, ChatGPT remains more of a curiosity than a tool integrated into daily workflows. As ChatGPT evolves and its practical applications become more evident, occam will monitor respondents for a shift toward more frequent usage.

Occam data shows that younger adults are substantially more likely than older respondents to have opened a ChatGPT account (Figure 3). Participation among seniors is so low that ChatGPT penetration in the 30-44 age cohort is about eight times that of the 65+ cohort. Interestingly, although we expected the youngest adults to show the highest penetration, penetration in the 30-44 cohort somewhat exceeds that in the 18-29 cohort.

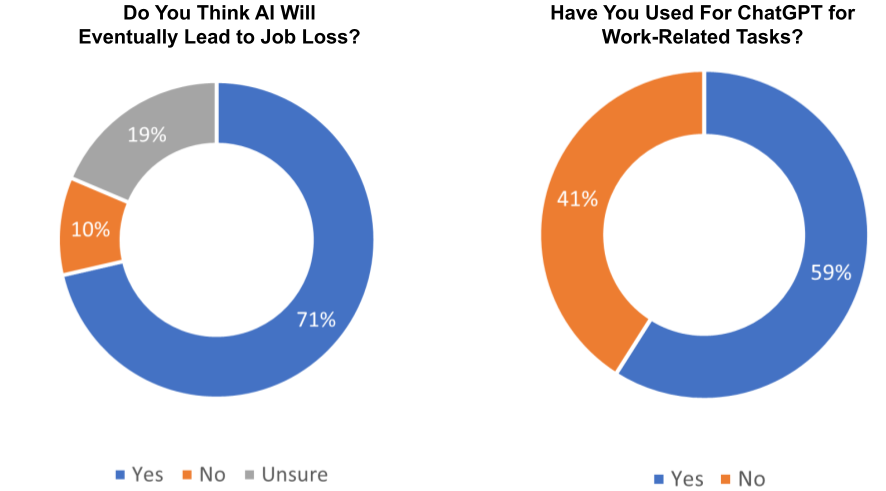

Occam data reveals that 71% of respondents who are familiar with ChatGPT believe AI technology will lead to job losses. We expect this share to creep higher as the public comes to understand that AI is already remarkably proficient at certain repetitive tasks performed by humans, such as data entry, proofreading, translation, and answering simple customer service inquiries. Yet even as job security fears remain strong, 59% of ChatGPT users are already using the tool at work (Figure 4), which supports our optimism that generative AI will drive meaningful productivity gains among white-collar professionals over the medium term.

Occam data also reveals disillusionment with AI among a growing minority of respondents. The proportion who believe AI will not make them more efficient at work or school rose from 20% in January to over 30% in March (Figure 5). Meanwhile, numerous media reports have covered “AI hallucinations,” instances in which AI generates inaccurate or nonsensical output yet delivers it confidently. We believe the growing prevalence of these stories has fueled skepticism about the trustworthiness of ChatGPT output, consistent with occam data showing that the percentage of respondents who distrust AI-generated answers rose from 25% in January to 32% in March (Figure 6).

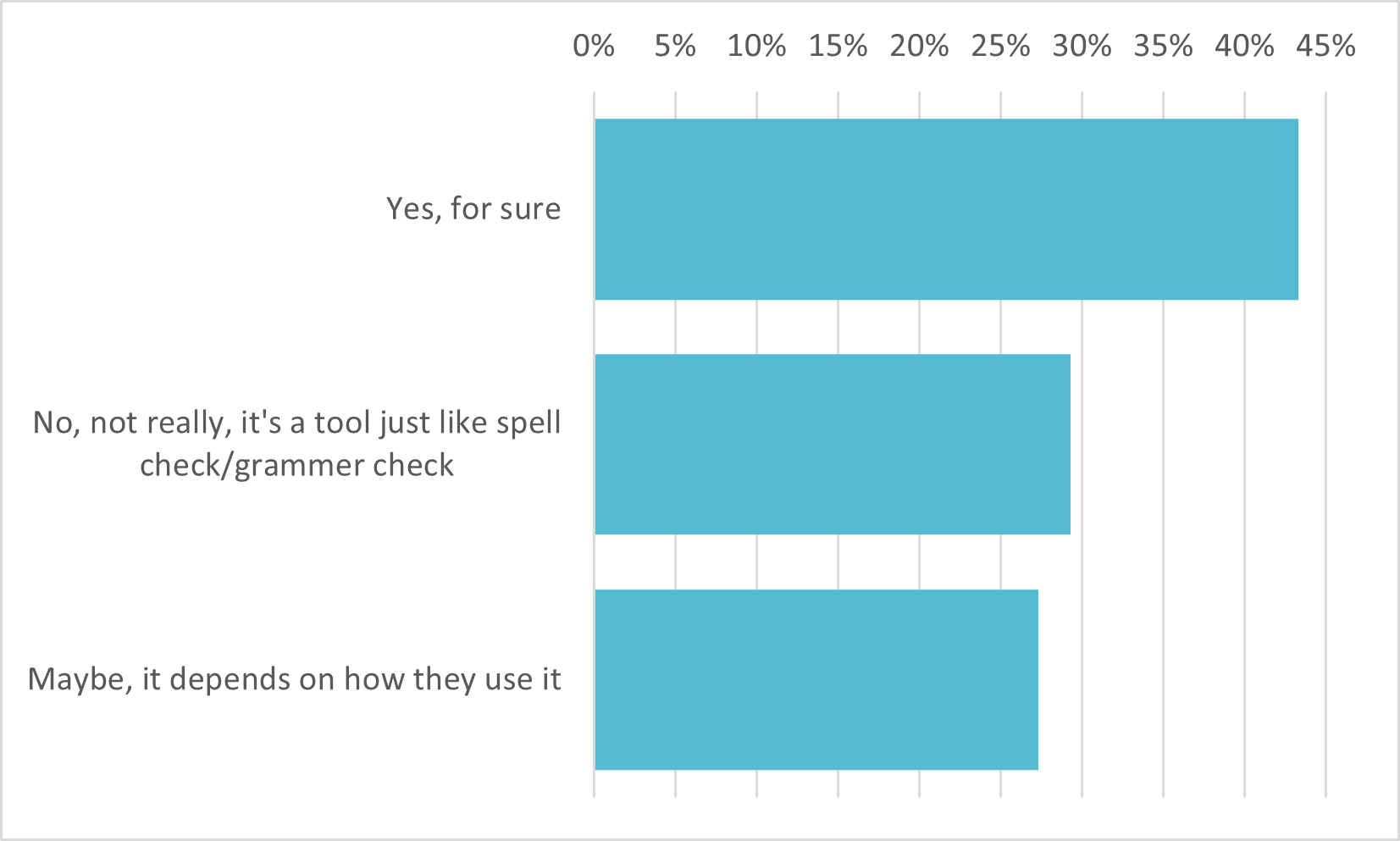

We asked occam respondents the following question: “If someone were to use ChatGPT to assist in writing a college paper, would you consider this cheating?” (Figure 7). Almost 45% consider it cheating, 30% view it as a legitimate tool akin to a spell checker or grammar checker, and more than 25% believe the answer depends on how ChatGPT is used. These divergent views highlight the need for a broader discussion of the ethical implications of AI, not only in academic settings but across many other domains.

Source: Analysis based on occam™ proprietary AI-enhanced research platform with various data sources, including a wide range of questions asked to over 1,000 respondents per day with over three years of history. Information is census-balanced and uses occam’s™ proprietary AI algorithm to ensure minimal sampling bias (<1%). Contact us for more info.

Figure 1. ChatGPT Users: Awareness of generative AI tools continues to grow at a rapid pace

Figure 2. ChatGPT Usage: Less than half of ChatGPT users report using the tool more than once a week

Figure 3. ChatGPT Users: Younger cohorts are more likely to have a ChatGPT account

Figure 4. AI in the Workplace: Job security fears remain strong as 59% of ChatGPT users are already using the tool at work

Figure 5. AI and Productivity: The hype for AI's potential is simmering down

Figure 6. Faith in AI: Generative AI users are becoming more aware of its limits

Figure 7. Ethics of AI: There is no clear consensus on ethical applications of ChatGPT

AlphaROC occam case studies are for illustrative purposes only. This material is not intended as a formal research report and should not be relied upon as a basis for making an investment decision. The firm, its employees, data vendors, and advisors may hold positions, including contrary positions, in companies discussed in these reports. It should not be assumed that any investments in securities, companies, sectors, or markets identified and described in these case studies will be profitable. Investors should consult with their advisors to determine the suitability of each investment based on their unique individual situation. Past performance is no guarantee of future results.